Editor’s Note: MIT’s McAfee will keynote our Sept. 28 CEO Talent Summit (hosted virtually) along with former Medtronic CEO Bill George and Verizon CHRO Samantha Hammock. It’ll be a great session to help you develop the workforce strategy you need for the unpredictable year, and years, ahead.

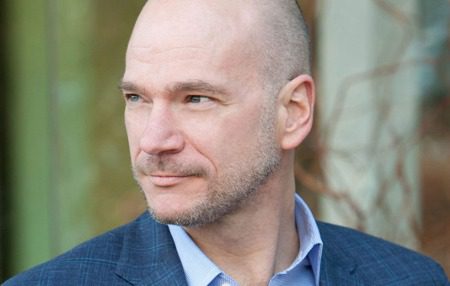

The last time I talked with Andrew McAfee, a cofounder and codirector of the MIT Initiative on the Digital Economy at the MIT Sloan School of Management, was just before Covid, and we didn’t discuss Covid once. That’s ironic, given that he’s a futurist and we were talking about the future of work.

But while McAfee, author of the bestsellers More From Less, Machine, Platform, Crowd and The Second Machine Age, may not have predicted that the whole world would be shut down by a virus just months after our conversation, upending workplace norms developed over a century, our discussion did nail a lot of other issues that have come to pass, many of them accelerated by the headlong rush of technological change over the last three years.

Chief among them is the explosive growth of artificial intelligence in all aspects of our lives. It may not always be clear how or when it’s working, but it’s present in a dizzying number of interactions between customers and suppliers, bosses and employees, even artists and their audiences.

While so much of the coverage of technology and the workplace focuses on doomsday scenarios, with evil capitalists using the power of machines to cheaply displace millions of workers, McAfee, drawing on a lot of consulting inside real, live companies, sees things very differently. He sees a huge, under-discussed opportunity to help humans do the work meant for humans and, beyond that, a chance for companies to understand the potential and needs of all their workers in unmatched detail, expanding their skills, growing their pay and nourishing their career aspirations.

As an example, he recently worked with a big e-tailer to map the underlying skills in key jobs in its workplace, and then developed algorithms to scan for people at other companies with very different jobs and titles who share the same skill sets, unearthing candidates hiding in plain sight.

Efforts like this one, scaled and unleashed across all of business, will prove a boon for employers and workers alike. “You’ll increase their wages, you’ll give them a path that feels better to them,” he says. The following conversation was edited for length and clarity:

So often, what we hear about the interface between technology and the workforce is very, very negative: we’re going to put tens of thousands of people out of jobs with AI, we’re keylogging people, we’re violating privacy, and Gen Z and millennials just aren’t going to stand for it. But you say we’re really underselling and underestimating the potential upside of all this technology, how it can help the workforce and help business do things better together, right?

I’m completely saying that. Measurement is not the enemy on either side of that social contract, right? Because the better I understand what you are actually good at, the better I can make use of those skills. And if I combine that with some information about where you might want to take your career or your next job, fantastic. Am I demonstrably underpaying you? Yeah, in some cases, I absolutely am. We only know that by measuring things pretty well and being able to put that information in a digestible form.

So, if measurement is intrusive, or surreptitious, or inappropriate for what we’re trying to accomplish, then there are problems with measurement. But the idea that measurement of what people do on the job is somehow bad, that doesn’t make sense for a minute.

So, what’s the gap? Where are we, and where do we need to get to quickly for technology to play that better, more useful role in commerce when it comes to the talent in the workforce?

We need to build up our capabilities to handle people’s professional information, to bring them within the same order of magnitude of refinement as our handling of people’s social information. The social Web 2.0 is, what, fifteen years old now? Holy cow, are we good at assessing all the things you do on platforms, all the things I do on platforms, all of our clicks, all of that to serve very tailored ads. That is a huge, well-developed industry. We can argue ethics. We can argue pros and cons. But it is a big, very, very refined industry.

We need to get as good at measuring our professional activities as we are at measuring our personal activities, for the very simple reason that it’s very hard to manage what you can only measure poorly. The better we get at measuring things like your skills, your trajectory, and where you want to take your next job and your career, the better we can help you with that.

The thing I don’t like about a lot of the current [media] coverage is that it harks back to the robber-baron era of thinking about business, where there are just evil bosses trying to exploit helpless employees. Man, that’s a tired narrative. It doesn’t really work anymore. I guarantee you every company that I’ve worked with really wants its talent not to walk out the door, not to be unhappy and not to quit.

So, they want to know who those people are, why they’re being underpaid, what is the right market wage for the skills that they have, taking into account local conditions, taking into account inflation. All these different things. Just a simple question like, what’s the right wage to pay you to minimize the chances you’re going to walk out the door because you’re unhappy with your salary? That’s a very simple question. If we can help companies answer that, both sides will be happier.

So, if we get this right, where are the sunny uplands here? Where could we be going?

Here is an easy step: When I talk to companies about their merit cycle process, what we used to call performance reviews or bonuses, the instant I ask an executive or business leader about it, they do some combination of hitting their head against the nearest hard object and curling up into a fetal position. They’re like, “Look, it’s this long, drawn-out process. It kind of sucks. It takes a long time. Who are we most at risk of losing? Who’s about to walk out the door here? We don’t really know. We don’t really know what the fair wage is to pay them.”

So, we wind up in this long series of meetings where there’s some poor person trying to manage an Excel spreadsheet capturing the results of the discussion, where we take the merit pool and try to allocate it intelligently across a group of people. That’s a bummer of a process now.

One thing we can do pretty quickly [with technology] is remove a huge amount of the headache there and say, “Okay, you’ve got $X million of merit pool to allocate across all the people in the marketing department.” Boom. Here’s the first pass at that. Taking into account everything we know about job titles and wages and prevailing wages in regions and things like that, boom, here’s a plan. We’ve just given you a plan.

Now, you can tweak it: “We’ve got to give Dan more, for all these reasons we can talk about. We’ve got to bump him up a little bit.” Great. Rejigger the rest of the numbers and give people a merit cycle process that is relatively painless rather than relatively painful, and that involves a decent amount of data and a decent amount of optimization rather than a huge amount of hand-waving and guesswork. That’s what we can do.
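McAfee doesn’t spell out how such a first-pass plan would be computed. Purely as an illustration, here is a minimal Python sketch under our own assumptions: each person’s share of the pool is weighted by how far their salary sits below an estimated market wage, plus a small floor (1% of salary) so nobody gets zero, and a manager’s override (the “give Dan more” step) is locked in before the rest of the pool is re-spread. The `Employee` fields, the 1% floor, the function name and the sample figures are all hypothetical, not any real vendor’s method.

```python
from dataclasses import dataclass


@dataclass
class Employee:
    name: str
    salary: float       # current base pay (hypothetical field)
    market_wage: float  # estimated market rate for role and region (hypothetical)


def allocate_merit_pool(staff, pool, overrides=None, floor_rate=0.01):
    """First-pass merit plan (illustrative sketch, not a real product's logic).

    Splits `pool` in proportion to each person's gap below market wage,
    plus a floor of `floor_rate` * salary. Any fixed raises in `overrides`
    are honored first; the remaining pool is re-spread across everyone else.
    """
    overrides = overrides or {}
    fixed = sum(overrides.values())
    if fixed > pool:
        raise ValueError("overrides exceed the merit pool")
    remaining = pool - fixed

    # Everyone without a manual override shares what is left of the pool.
    others = [e for e in staff if e.name not in overrides]
    weights = {
        e.name: max(e.market_wage - e.salary, 0.0) + floor_rate * e.salary
        for e in others
    }
    total_weight = sum(weights.values())

    plan = dict(overrides)
    for e in others:
        plan[e.name] = remaining * weights[e.name] / total_weight if total_weight else 0.0
    return plan


if __name__ == "__main__":
    staff = [
        Employee("Ana", 90_000, 100_000),
        Employee("Dan", 80_000, 95_000),
        Employee("Lee", 120_000, 115_000),
    ]
    # First pass: the "boom, here's a plan" step.
    print({k: round(v, 2) for k, v in allocate_merit_pool(staff, 30_000).items()})
    # Manager bumps Dan; the other numbers are rejiggered automatically.
    print({k: round(v, 2) for k, v in allocate_merit_pool(staff, 30_000, {"Dan": 18_000}).items()})
```

Proportional weighting is only one possible choice; a real tool would presumably fold in performance ratings, attrition risk and local pay data, as the interview suggests.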

That’s a beachhead to doing all kinds of other things that way. What’s the training plan? What’s the upskilling path? How do I, as a boss, feel like I’ve got a decent cockpit or a decent dashboard for the people in my organization as opposed to what I have now, which is kind of a list of headcount by department? Let’s get a lot better than that, right?

If you’re going to build a cutting-edge factory today, every machine in that factory is instrumented and you have a very, very precise dashboard of how well the machines in your factory are doing. The idea that we’re many, many years behind that with people doesn’t make a ton of sense to me. That’s what we need to fix.

Of course, to take the other side, there’s the potential here for an Orwellian future. Look at what China does with social credit scores. How do we avoid falling into that with the things you’re talking about here?

It’s a justifiable thing to worry about. What’s going to keep us from tipping into that is, at a high level, our values as a society, and then the laws and institutions that flow from them. We have decided as a liberal democracy that we do not want to be an authoritarian state. We have a 200-plus-year tradition of thinking hard about, and then carefully limiting, the powers of government, for example. So, I ain’t voting for anybody who thinks the social credit score is a good idea. And I’m pretty sure that it would get struck down by the courts, as they’re currently configured, for a long time to come, in the same way that the cops can’t just, you know, beat down your door without a search warrant, right?

On the corporate side, the other big force we have is competition: the fact that you can take your human capital and go elsewhere with it, which is the huge advantage a market-based system has over a centrally planned economy, right?

The single biggest thing is to give people skills, valuable skills that they can then go take elsewhere if their current situation seems really Orwellian to them. Now, I can imagine layering onto that some requirements that, for example, your employer has to make it clear to you what kinds of things they’re monitoring and which ones they aren’t. I don’t know if that’s the current state of the law. I can imagine reasons why that would be a good idea. It’s the kind of thing we need to think about.

I’ve always believed that sunlight is the best disinfectant. If you or I take a job and the employer says, “Look, here’s what we’re going to do, and if we change that, we are going to tell you about it,” good. I believe that adults, once you give them information, tend to make informed decisions.

There’s this deep split in how we think about people in this technologically sophisticated environment. One is, to my eyes, that they’re kind of clueless and helpless and we must make their choices for them. We must say, for example, you can’t keep user information or you can’t use AI to place people in a job or to admit them to school.

I find that a little paternalistic. I find that, in some ways, a lot paternalistic, this idea that, for example, people aren’t aware of what Facebook is doing. I really think people are aware of what Facebook is doing and how they make their money. I don’t think that’s one of the great mysteries of our time. And the fact that Facebook has billions of users, to me, means that people are kind of aware of the contract and they’re signing up for it.

Now again, do we need transparency? Do we need clarity? Yeah, I think we could do with some more of that. But I’m in the camp that believes that, especially with adults, if you are sure to give them information, we can trust them, by and large, to make decisions where they look out for themselves and make intelligent choices. I find that a pretty deep split, and I’m on the side that says, “Wow, I believe we can fundamentally trust people to make decent decisions if we give them information.”

It’s when employers violate that trust that people either step away from the job or step away from Facebook or step away from whatever. It’s when people feel that they’ve been misused.

Absolutely. And that can happen if the employer, for example, wasn’t clear about the monitoring, about the surveillance. But people working in an Amazon warehouse, are they aware that their activities are being tracked? I certainly think so. There can’t be any big mystery. You’re a warehouse worker at Amazon. You think they just, like, don’t care what you do for eight hours? The idea that that’s some kind of violation, I don’t love that idea.